It was a Friday in August, and before the blackout there was the usual sense of urgency: people skipping off to start their weekend, or at least enjoying the endorphin rush that comes with knowing R&R is imminent, for those who had the weekend off.

This Friday was different. Just before 5pm, trains halted and traffic lights glitched in central London; a metaphorical handbrake was placed on everyone’s journeys. A blanket of darkness swept over parts of England and Wales in the late afternoon of 9 August 2019. Chaos engulfed the regular journey home for many London commuters, whether they were sitting in their cars or wading through busy mainline stations.

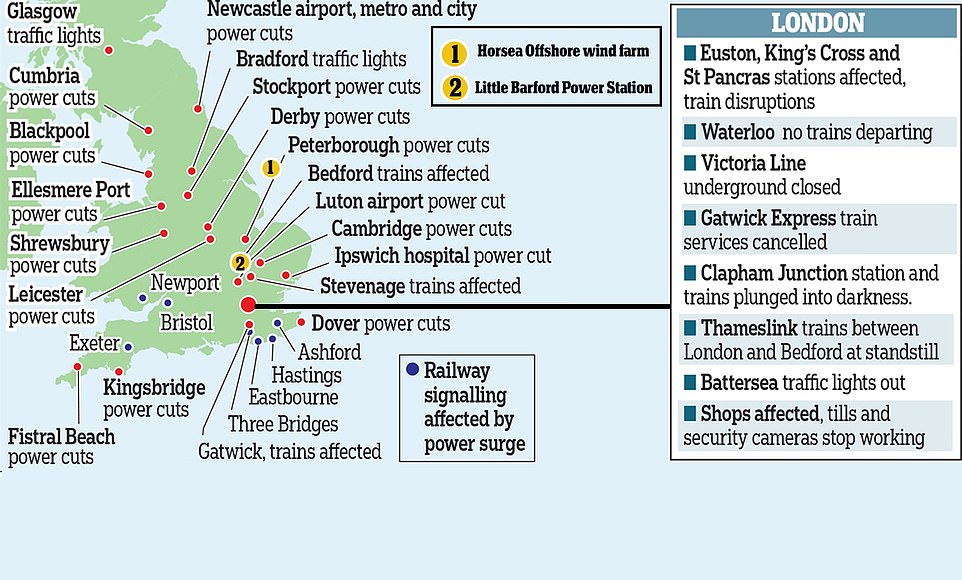

Walking through Cardiff around the same time, you’d have heard masses of security alarms going off, like something out of a Mission Impossible movie. Newcastle homes and businesses were affected, and the local airport announced flight cancellations. Not all of the power outages on that day can be attributed to the causes discussed here; the recorded blackouts are visible in the map below.

Power cuts registered on 9 August 2019

You might be thinking, ‘How can a train problem at London Euston simultaneously affect traffic lights in Bradford, yet cause no significant power disturbances in between?’ Widely separated areas of England and Wales, with disparate tenures, were affected that Friday. I was in Oxford and had no inkling: no horror-movie-style lights-out, no sudden quiet as my fridge cut out to signal that power lines were down. The question may not bother you for longer than a few seconds, because you know about the National Grid, right? You may have mapped out a plan: if it ever happened, you’d start walking home, and if it went on for hours, you’d be forced into eating the contents of your fridge before they spoiled.

If you want to understand a little bit more about your electricity supply and get an insight into what is keeping the WiFi signal alive, here’s a high-level intro to the events of that evening.

As you read on, you’ll need a little technical background on AC power networks. When power demand is greater than the power generated, the frequency falls. When the power generated is greater than demand, the frequency rises. Once the frequency drifts outside the set tolerance of 50Hz +/-1%, any service or appliance connected to the grid will experience instability and/or damage. Hence, frequency changes are monitored and balanced meticulously by controlling demand and total generation. There’s a website here if you want to see what is happening right now.
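To make that tolerance band concrete, here is a minimal Python sketch of the rule just described. The 50Hz nominal value and the +/-1% tolerance come from the text; the function name `classify_frequency` and the labels it returns are hypothetical, chosen purely for illustration, and this is not a description of how the ESO’s monitoring actually works.

```python
NOMINAL_HZ = 50.0   # nominal grid frequency, from the text
TOLERANCE = 0.01    # +/-1% tolerance, from the text


def classify_frequency(measured_hz: float) -> str:
    """Hypothetical helper: label a reading against the tolerance band."""
    band_hz = NOMINAL_HZ * TOLERANCE      # half-width of the band (0.5 Hz)
    low = NOMINAL_HZ - band_hz            # lower limit, 49.5 Hz
    high = NOMINAL_HZ + band_hz           # upper limit, 50.5 Hz
    if measured_hz < low:
        # Frequency falls when demand outstrips generation
        return "low: demand exceeds generation"
    if measured_hz > high:
        # Frequency rises when generation outstrips demand
        return "high: generation exceeds demand"
    return "within tolerance"


# Illustrative readings only, not measurements from the day
for reading in (50.0, 49.0, 50.8):
    print(reading, "->", classify_frequency(reading))
```

In other words, the grid is happy anywhere between 49.5Hz and 50.5Hz, and the direction of any drift tells you which side of the demand-generation balance is winning.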

Watt happened then?

Some 1.1m customers experienced a problem; blackouts on this scale are rare, so much so that the energy watchdog, Ofgem, demanded a report. It was released a couple of weeks ago, and some of the findings are mentioned here.

Key Connected Personnel

Did the fact that it was 5pm on a Friday, when certain connected people had already started their weekend, have anything to do with it? Only in terms of operational communications. There’s a protocol stating that a sequence of communications must be released. Because the incident fell on a Friday, it is believed that certain key members were not readily available; however, it’s a red herring to believe this had an impact on the nationwide extent of the power cut. The important decisions were left to the Electricity System Operator (ESO) control office, which manages the response in such situations.

Electricity demand

The ESO had forecast demand, expecting it to be the same as the previous Friday. Post-event analysis, in which the demand profiles for the two days were mapped, shows almost identical dips and rises. Nothing to point the finger at here. It’s not like when Love Island aired earlier in 2019 and caused a surge in demand. That particular increase caused the grid to switch onto coal-powered generation after the longest stint of coal-free power generation. (Like we needed a reason to dislike that programme.) Incidentally, that record still stands at 18 days, 6 hours and 10 minutes. To date this year, we have prevented 5m tonnes of carbon dioxide (source: Guardian) being released into the atmosphere. Greta would be pleased to know.

Electricity generation for the day was as expected. Humans are creatures of habit, so consumption was predictable, and it was known that neither wind nor solar was going to break any records. The generation mix was as per any regular day in August.

The Weather

Did the weather have anything to do with it? The ESO control room is provided with lightning strike forecasts. These come from MeteoGroup, broken down by geographical region, with the likelihood of a strike represented on a scale of 1 to 5. A few minutes prior to the strike, the details sent across were ‘1’, signifying that the highest risk of lightning was predicted practically everywhere in England. Within the two hours prior to 5pm, mainland UK had 2,160 strikes. So when lightning struck the transmission circuit, hitting a pylon near St Neots, it wasn’t a surprise.

Lightning strikes are routinely managed as part of everyday system operations. The eventuality is factored in by the ESO. The Protection System detected the fault and operated as expected within the 20-second time limit specified by the grid code. The embedded generation, which was part of the response to the strike, had a small issue on the distribution system. A reduction of 500MW in generated electricity was recorded. The monitoring system calculated the frequency on the grid, which was in tolerance, and any voltage disturbances were within industry standards; the Loss of Mains protection that was triggered was on cue. The ESO states that this was all handled as expected in the response to the lightning event, and that the network was restored to its pre-event condition.

The catalyst for the wide-scale power problems was a pair of unrelated, independent problems that occurred at two separate sites just minutes later. Not one but two disparate power plants had a problem at nigh on the same time.

Problems at an offshore wind farm (Hornsea) and a gas power station (Little Barford) meant the National Grid lost a combined 1691MW as these two sites started under-generating. (For the record, these losses are attributed to the consequences of the lightning strike, but the industry is asking questions about compliance; nothing is clarified yet.) These generators fell off the grid, and because demand was now greater than the electricity generated, the frequency fell below the tolerance value. To correct the frequency, the ESO did its job by prioritising disconnection of major load: it had to reduce demand by 1000MW. This equated to 5% of the consumption at that time. Hopefully you were in the protected 95% that was kept powered!
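The figures in this paragraph can be cross-checked with a few lines of Python. This is only back-of-the-envelope arithmetic on the numbers quoted above (1691MW lost, 1000MW shed, 5% of consumption); the implied total demand is an inference from those figures rather than a number taken from the report, and the variable names are made up for illustration.

```python
lost_generation_mw = 1691   # combined loss at Hornsea and Little Barford (from the text)
shed_demand_mw = 1000       # demand disconnected by the ESO (from the text)
shed_fraction = 0.05        # share of consumption disconnected (from the text)

# If 1000MW was 5% of consumption, total consumption was roughly:
implied_demand_mw = shed_demand_mw / shed_fraction

# Lost generation expressed as a share of that implied demand:
lost_share = lost_generation_mw / implied_demand_mw

print(f"Implied demand at the time: {implied_demand_mw:.0f} MW")
print(f"Lost generation as a share of demand: {lost_share:.1%}")
```

So, taking the article’s numbers at face value, consumption at the time was in the region of 20,000MW, and the two sites between them accounted for roughly 8.5% of it.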

Why wasn’t generation increased?

Reserves are already part of most plants, so the solution would be to have more reserves available, right? Yes, but it is cheaper to just turn off the load. It is also instantaneous, not unlike having an overload on your UPS: you react by unplugging the load and then contemplate whether you need a higher-capacity UPS. Not every power source can produce enough energy to stabilise the ‘demand-generation’ equation. Ramping up generation represents a significant outlay, and sometimes the costs are inexact, particularly for solar and wind plants, because of forecast uncertainty. And lest we forget, every power plant is a business that needs to make money.

A note about renewable energy: the National Grid supply was originally set up for fossil fuel, and the integration of renewable energy into the system is not simple. There are technical discussions relating to inertia, stability and ongoing compliance monitoring that need to be addressed by policy makers and operators before we see large-scale deployment. Around 30% deployment seems to be the average uptake in countries globally; going beyond this will require changes to system operations and market designs. It is comforting to know that the National Grid is already being adapted and is expecting to be a carbon-free grid in the next 6 years.

Reducing demand

Each geographical region of the country is unique. The frequency recovery in different areas depends on the transmission voltage, the transmission lines, energy generation, voltage support and so on. Routine maintenance is also carried out on circuits and equipment, rendering them out of service. Simply put, each region will react differently when demand and generation are altered. The ESO is set up to manage faults and control demand. This is done in a predetermined manner based on knowledge of the limitations of all these regions; those that lost power were scheduled to lose power.

Large users of electricity actually know the score when they connect to the grid. In these situations, the ESO will trigger the Distribution Network Operators (DNOs) to power down companies whose contracts agree that their energy can be cut off to stabilise the grid, i.e. balance the frequency. It doesn’t matter if it’s peak time in London’s stations: the agreement is to ‘pull the switch’ on non-essential supplies. The ESO signals to the DNO to stop powering those companies when it needs to control demand. The agreement is to cut off for 30 minutes.
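As a rough illustration of what ‘predetermined’ disconnection could look like, here is a small Python sketch. Everything in it is an assumption made for the sake of the example: the `Load` class, the `select_loads_to_shed` function, the customer labels and the MW figures are all hypothetical, and the real ESO and DNO schemes are far more involved. It simply walks an ordered list and picks contractually interruptible loads until a target reduction is reached.

```python
from dataclasses import dataclass


@dataclass
class Load:
    name: str            # hypothetical customer label
    demand_mw: float     # hypothetical demand figure
    interruptible: bool  # True if the contract allows disconnection


def select_loads_to_shed(loads: list[Load], target_mw: float) -> list[Load]:
    """Walk the predetermined list in order, picking interruptible loads
    until the requested reduction is met (or the list runs out)."""
    selected = []
    shed_so_far = 0.0
    for load in loads:
        if shed_so_far >= target_mw:
            break  # target reached, leave the remaining loads connected
        if load.interruptible:
            selected.append(load)
            shed_so_far += load.demand_mw
    return selected


# Illustrative data only; none of these figures come from the report
loads = [
    Load("essential service", 40.0, False),
    Load("rail depot", 60.0, True),
    Load("factory", 50.0, True),
    Load("warehouse", 30.0, True),
]
shed = select_loads_to_shed(loads, target_mw=100.0)
print([load.name for load in shed])
```

With this made-up list and a 100MW target, the essential service is skipped, the rail depot and factory are disconnected, and the warehouse stays on because the target has already been met.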

Further delays experienced by London’s commuters beyond this half an hour are reported to be the result of those companies having to restart their systems after being cut off. A certain new class of trains needed technicians to reboot approximately 80 trains manually, on site and one at a time. On occasion, the train companies had shifted supply to backup power, but when the grid was back the trains had complications switching onto grid power.

Companies would rather have the power cut than sustain long-term equipment damage. Even so, the outcome was unacceptable to the train operators, and they demanded answers, as the scale of disruption was phenomenal.

The report suggests that the ‘critical loads’ were affected for several hours because of shortcomings in their customers’ systems, as the DNOs had only pulled the switch for 30 minutes. It also suggests that no critical infrastructure or service should be placed at risk of unnecessary disconnection by DNOs.

There are plans afoot to address the shortcomings highlighted by the report; we can only wait to see whether a power cut on this scale recurs. Modern technology can only facilitate improvements. Many of us have Smart Meters installed, and the data these feed back will allow smart management. This would give the DNOs the opportunity to improve reliability and switch off only the non-critical loads when their network is being put under stress. Hey, you didn’t believe that those temperamental meters were just a freebie for you to cut back usage and reduce your fuel bills, did you?

Sources: https://www.ofgem.gov.uk/system/files/docs/2019/08/incident_report_lfdd_-_summary_-_final.pdf

twitter #UK_coal

Ofgem